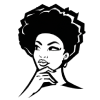

I reviewed research on relaxing landscapes, which led to savannah and underwater settings.

Developing Two Virtual-Reality Environments and Detecting Emotions with AI

- Featured

- VR

- AI

Introduction

What was this project about?

This project was my master's thesis. It explored whether calming VR experiences could help people experiencing cognitive decline feel more relaxed and perform better on cognitive tasks.

My Role

I developed two VR environments and contributed to the full research process: early research, VR design, cognitive test development, EEG data collection and preprocessing, feature extraction, machine learning model training (AI), and scientific paper writing.

Technical Details

VR Development: Unity, C#

Cognitive Tests: Vanilla JavaScript

Dataset: Raw Brain Signals (EEG)

AI & Preprocessing: MATLAB, EEGLAB, Python, Scikit-learn, TensorFlow/Keras

Timeline

2019-2020

TL;DR

Problem

People experiencing cognitive decline may also experience negative emotions such as frustration, stress, and confusion.

- Negative emotions can affect well-being, decision-making, and cognitive performance.

- Could VR immersion help with relaxation and cognitive performance?

Solution

I developed two relaxing VR environments and an AI model for detecting confusion from brain signals.

- I developed two relaxing VR environments.

- I developed an AI model that could detect confusion from brain-signal data (EEG), with the long-term goal of making the VR experience adapt to how the participant feels.

- We measured the participants' negative emotions before, during, and after the VR session.

Process

I developed two VR environments in Unity. Features included NPC pathfinding and a Goal-Oriented Action Planning system. I made the environments adaptable, with lighting, sound volume, trees, animals, sky color, and environment colors modifiable in real time.
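To give an idea of what Goal-Oriented Action Planning does, here is a minimal, generic sketch. It is written in Python to stay short and is not the C# code from the Unity project. The facts, action names, and costs (`at_grass`, `walk_to_water`, and so on) are hypothetical and chosen only for illustration. The planner searches for the cheapest sequence of actions whose effects turn the NPC's current state into one that satisfies its goal.

```python
import heapq
from itertools import count


class Action:
    """A GOAP action: usable when its preconditions hold, then changes the state."""

    def __init__(self, name, preconditions=(), adds=(), removes=(), cost=1):
        self.name = name
        self.preconditions = frozenset(preconditions)
        self.adds = frozenset(adds)
        self.removes = frozenset(removes)
        self.cost = cost


def plan(start, goal, actions):
    """Uniform-cost search over world states.

    Returns the cheapest list of action names that reaches the goal,
    or None if no sequence of actions can reach it.
    """
    start, goal = frozenset(start), frozenset(goal)
    tie = count()  # tie-breaker so the heap never has to compare states
    frontier = [(0, next(tie), start, [])]
    visited = set()
    while frontier:
        cost, _, state, path = heapq.heappop(frontier)
        if goal <= state:  # every goal fact is true in this state
            return path
        if state in visited:
            continue
        visited.add(state)
        for action in actions:
            if action.preconditions <= state:
                new_state = (state - action.removes) | action.adds
                heapq.heappush(
                    frontier,
                    (cost + action.cost, next(tie), new_state, path + [action.name]),
                )
    return None


# Hypothetical actions for an animal NPC (illustrative only).
actions = [
    Action("walk_to_water", adds={"at_water"}, removes={"at_grass"}, cost=2),
    Action("walk_to_grass", adds={"at_grass"}, removes={"at_water"}, cost=2),
    Action("drink", preconditions={"at_water"}, adds={"hydrated"}),
    Action("graze", preconditions={"at_grass"}, adds={"fed"}),
]

steps = plan(start={"at_grass"}, goal={"hydrated", "fed"}, actions=actions)
print(steps)
```

Because the NPC starts on the grass, the planner grazes first and only then walks to the water, which avoids a second trip.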

I built cognitive tests in JavaScript, then collected EEG data while participants completed them and reported their confusion level after each exercise.

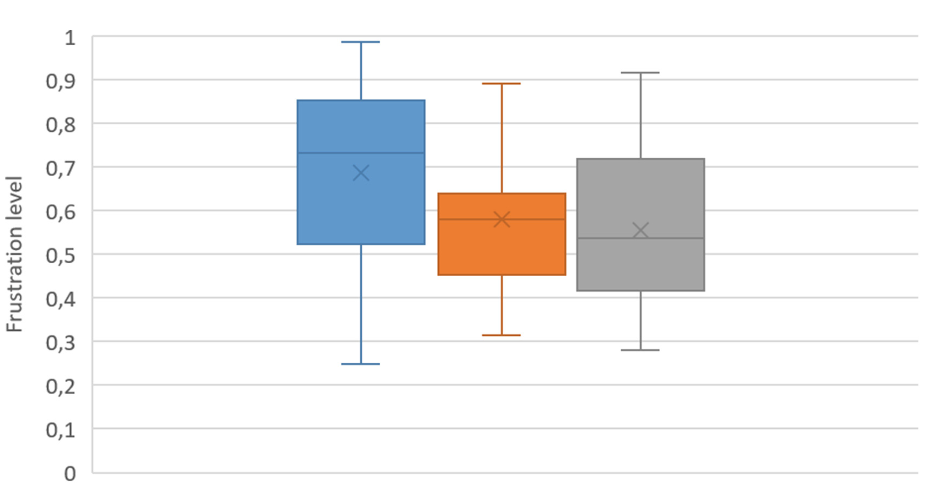

I cleaned EEG signals with MATLAB and EEGLAB, removed artifacts (e.g., eye movements, muscle activity) with filtering and ICA, then extracted FFT and band-power features.
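The band-power step can be sketched as follows. This is a minimal standard-library Python example, not the MATLAB/EEGLAB pipeline used in the project. The band limits, the 64 Hz sampling rate, and the synthetic sine-wave signal are all assumptions made for illustration. The idea is to compute the signal's frequency spectrum, then sum the power that falls inside each frequency band.

```python
import cmath
import math

# Commonly used EEG band limits in Hz (an assumption; the project may use others).
BANDS = {"delta": (1, 4), "theta": (4, 8), "alpha": (8, 13), "beta": (13, 30)}


def power_spectrum(signal, fs):
    """Return (freqs, power) for the one-sided spectrum, using a naive DFT.

    A real pipeline would use an FFT routine; the naive DFT keeps this
    sketch dependency-free and is fast enough for short windows.
    """
    n = len(signal)
    mean = sum(signal) / n
    centered = [x - mean for x in signal]  # remove the DC offset
    freqs, power = [], []
    for k in range(n // 2 + 1):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * t / n)
            for t, x in enumerate(centered)
        )
        freqs.append(k * fs / n)
        power.append(abs(coeff) ** 2 / n)
    return freqs, power


def band_powers(signal, fs, bands=BANDS):
    """Sum spectral power inside each band (low edge inclusive, high edge exclusive)."""
    freqs, power = power_spectrum(signal, fs)
    return {
        name: sum(p for f, p in zip(freqs, power) if lo <= f < hi)
        for name, (lo, hi) in bands.items()
    }


# Synthetic 2-second signal: a pure 10 Hz sine sampled at 64 Hz (not real EEG).
fs = 64
signal = [math.sin(2 * math.pi * 10 * t / fs) for t in range(2 * fs)]

features = band_powers(signal, fs)
dominant = max(features, key=features.get)
print(dominant, features)
```

With a 10 Hz test signal, almost all of the power lands in the alpha band, which is a quick sanity check that the bands are wired up correctly.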

I trained and compared three AI models (KNN, SVM, and LSTM) to detect confusion from brain-signal data.
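As an illustration of the simplest of these models, here is a small k-nearest-neighbours (KNN) sketch with leave-one-out evaluation. The project used Scikit-learn; this version uses only the Python standard library so it runs on its own. The feature values and labels below are made up and are not data from the study.

```python
import math
from collections import Counter


def knn_predict(train_X, train_y, x, k=3):
    """Return the majority label among the k training samples closest to x."""
    order = sorted(range(len(train_X)), key=lambda i: math.dist(train_X[i], x))
    votes = Counter(train_y[i] for i in order[:k])
    return votes.most_common(1)[0][0]


def leave_one_out_accuracy(X, y, k=3):
    """Classify each sample using all the others and return the share that is correct."""
    correct = 0
    for i in range(len(X)):
        rest_X = X[:i] + X[i + 1:]
        rest_y = y[:i] + y[i + 1:]
        if knn_predict(rest_X, rest_y, X[i], k) == y[i]:
            correct += 1
    return correct / len(X)


# Made-up two-dimensional feature vectors (e.g., two band-power values per sample).
X = [
    [1.0, 4.0], [1.2, 3.8], [0.9, 4.2], [1.1, 4.1],
    [3.0, 1.5], [3.2, 1.2], [2.9, 1.4], [3.1, 1.6],
]
y = ["unconfused"] * 4 + ["confused"] * 4

accuracy = leave_one_out_accuracy(X, y, k=3)
prediction = knn_predict(X, y, [3.0, 1.3], k=3)
print(accuracy, prediction)
```

The toy clusters are cleanly separated, so this example classifies every sample correctly. Real EEG features overlap far more, which is why comparing several model types is worthwhile.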

Impact

VR relaxation

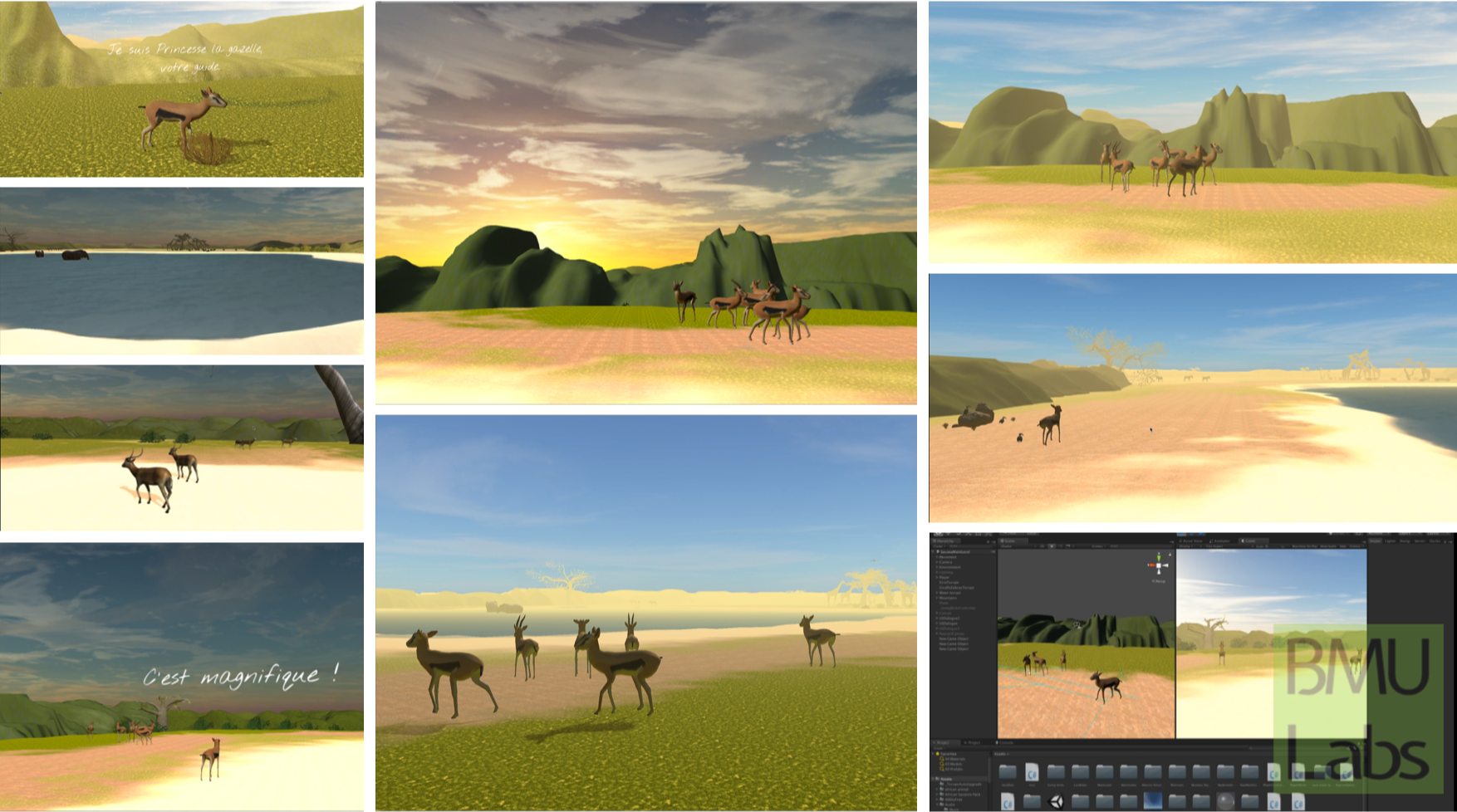

Preliminary results showed that participants’ frustration decreased during the Savannah VR experience, and that this positive effect was still observed after the immersion.

78.6%

accuracy reached by the best AI model when detecting a confused vs. unconfused state from brain-signal data.

Outreach

This work led to my master's thesis, a paper on Savannah VR, and a paper on AI-based confusion detection.